XAI: Explainable Artificial Intelligence

Nov. 21, 2017. New York Times. The full paper, by Cliff Kuang.

Can A.I. Be Taught to Explain Itself?

As machine learning becomes more powerful, the field’s researchers increasingly find themselves unable to account for what their algorithms know — or how they know it.

This paper doesn't deal with art, but artists and art lovers will find in it stimulating ideas, important scientific facts and even some political considerations.

If you are a hard worker and wish to know more, read the PDF presentation of the Darpa XAI project. You may also appreciate some comments. Note that the European AI community seems absent from the field. But, even in the US, the XAI concept presently seems to be only a Darpa initiative.

On the European regulation, see the paper by Bryce Goodman.

On a similar topic (understanding neural networks).

Our forward thinking about this topic.

1. Technical construction of XAI

XAI implies the reconciliation, or combination, of two different ways of "thinking" (insofar as the word applies to machines as well as to animals and humans): "intuitive" and "rational".

Note that:

- There are two ways of doing mathematics. The opposition is sometimes termed "geometry vs. algebra". Simple examples are the two proofs of the Pythagorean theorem. See for example an interview with Alain Connes, or the famed dispute between two mathematicians (Zariski and Weil?) about geometry.

- There are two ways of doing AI: GOFAI (Good Old-Fashioned AI), based on logic (mainly first-order), and the new one, based on neural networks, big data and the Cloud.

- Life has two ways of transmitting heredity: algebraic (genome, crossover) and geometric (structure of the embryo).

- Justice has two ways of making a ruling: formally applying the text of the law, or following the inner conviction of the judge (with case law somewhere in the middle).

- We could somehow apply here the dialectics proposed by Ianis Lallemand between specifications and matter (extended to the "public" as a kind of "matter").

This opposition could help us understand the XAI issue and the way to solve it. We can start from the Aristotelian sentence: man is a rational animal.

We can associate:

- the animal facet with intuition, which uses the body for perception, expression (and action). We share these functions with all animals (or at least with the most advanced ones). They could be associated with neural networks, all the more so if these are embedded in material devices, and particularly in robots.

- the rational facet with reasoning and logic. We have (partly) implemented these functions in computers as Turing machines.

XAI aims to combine these two modes. How. That's summarly explained in the

Gunning paper. To bridge the gap, the core issue is to relate neural nodes to

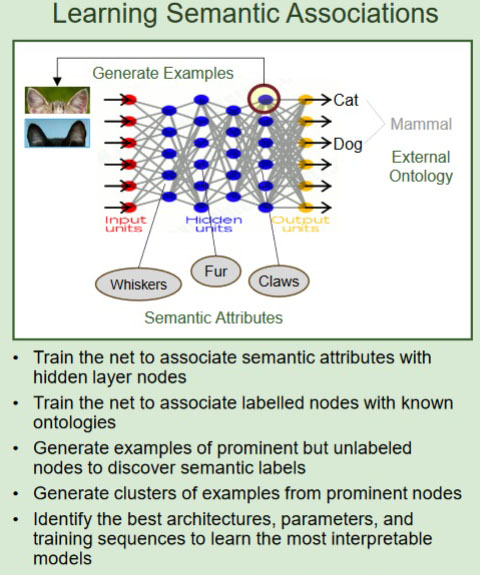

concepts. Gunning (see picture) says:

- train the net to associate semantic attributes with hidden layer nodes. That implies that the net is no longer seen as a non-structured array of neurons, but that there is some relation between the world's semantics and the structure of the nodes;

- train the net to associate labelled nodes with known ontologies. Here the connection is made, but not all nodes are labelled; then

- generate examples of prominent but unlabeled nodes to discover semantic labels.
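The three steps above can be illustrated with a toy sketch. It is not Gunning's training procedure: it swaps in a simpler post-hoc analysis that correlates recorded hidden-node activations with human-annotated attributes. The node names, attribute names, the small `ontology` dictionary and the 0.8 threshold are all hypothetical, chosen only for illustration.

```python
from statistics import mean, pstdev

def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    sx, sy = pstdev(xs), pstdev(ys)
    if sx == 0 or sy == 0:
        return 0.0
    mx, my = mean(xs), mean(ys)
    cov = mean((x - mx) * (y - my) for x, y in zip(xs, ys))
    return cov / (sx * sy)

def label_nodes(activations, attributes, threshold=0.8):
    """Step 1 (post-hoc variant): give each hidden node the attribute it
    correlates with best, or None if no correlation reaches the threshold."""
    labels = {}
    for node, acts in activations.items():
        best_attr, best_r = None, threshold
        for attr, flags in attributes.items():
            r = pearson(acts, flags)
            if r >= best_r:
                best_attr, best_r = attr, r
        labels[node] = best_attr
    return labels

def top_examples(activations, node, k=2):
    """Step 3: indices of the k examples that activate a node most strongly,
    to be shown to a human who may then propose a semantic label."""
    acts = activations[node]
    return sorted(range(len(acts)), key=lambda i: acts[i], reverse=True)[:k]

# Hypothetical recorded activations: one list per hidden node, one value per example.
activations = {
    "h1": [0.9, 0.8, 0.1, 0.0, 0.2],
    "h2": [0.1, 0.0, 0.9, 0.7, 0.1],
    "h3": [0.0, 0.2, 0.1, 0.0, 0.95],
}
# Hypothetical human annotations: 1 if the attribute is present in the example.
attributes = {
    "has_fur":   [1, 1, 0, 0, 0],
    "has_wings": [0, 0, 1, 1, 0],
}
# Step 2: a toy "ontology" linking attributes to higher-level concepts.
ontology = {"has_fur": "mammal", "has_wings": "bird"}

labels = label_nodes(activations, attributes)
concepts = {node: ontology[attr] for node, attr in labels.items() if attr}
unlabeled = [node for node, attr in labels.items() if attr is None]
to_inspect = {node: top_examples(activations, node) for node in unlabeled}

print(labels)      # node -> attribute (or None)
print(concepts)    # labelled node -> ontology concept
print(to_inspect)  # unlabeled node -> examples to show a human
```

In this toy data, one node stays unlabeled, and the examples that excite it most are the ones a person would look at to discover what the node stands for.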

As [Chen] says, "One of the challenges is to bridge the gap between low-level features and high-level events".

Note that images play their part in the process (see Chatonsky's AIM, Artificial Imagination). See for instance the Google-led Distill website.

In short, the issue is to reproduce the kind of work done by our brain to derive concepts from the flow of stimuli sent by the retinas and ears: a process that humans spend years learning and which, of course, is never finished. We keep learning up to our last moments of life.

2. Ethical aspects of XAI

To say that the AI must explain its decisions (and possibly its actions, if it is embedded in some machine, especially a drone or UAV, unmanned aerial vehicle) is to say that it is "responsible".

In case of wrongdoing, a judge (or other appropriate authority) can decide whether a penalty can be positive in the learning process, or whether the machine must be destroyed (killed) or authoritatively modified.

3. Economic and political issues of XAI

These considerations are far from abstract. In May 2018, the European Union's General Data Protection Regulation goes into effect.

Americans, like them or not, have the merit of launching beautiful projects and supporting them with millions or billions of dollars. They don't get lost in preconditions, speculations and linguistico-dogmatic blah-blah (Deleuze, Damasio, or even Chatonsky on his poor days).

On the other hand, America has, since the 1980s, let go of the basis of its economic ethics, especially antitrust law. And only Europe has the guts to launch a law about "explainability", with huge political as well as technical issues.